Why this problem matters

Admissions teams are not just overloaded by volume. They are also overloaded by inconsistent packet formats, evidence scattered across documents, and the pressure to score fairly under seasonal deadlines.

Most schools already run checklist-driven admissions workflows. The bottleneck is what happens after a packet looks "complete": readers still spend significant time finding relevant evidence, translating it into rubric categories, and writing consistent rationale for committee review.

Without a structured first pass, schools see predictable friction:

- longer first-pass review times

- higher clarification churn before committee meetings

- score variance across reviewers on the same rubric dimensions

- weaker audit trails when decisions are challenged

What the workflow looks like

An effective rubric assistant should prepare better review inputs, not replace admissions judgment.

1) Packet ingestion and completeness gate

Application artifacts (forms, transcripts, recommendations, essays) are parsed into a normalized structure. Missing or invalid items are flagged before reader assignment.
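
To make the gate concrete, here is a minimal sketch in Python. The `Artifact` and `GateResult` types, the `REQUIRED_ARTIFACTS` checklist, and the "empty extracted text means invalid" rule are all assumptions for illustration; a real deployment would take its checklist and validity rules from the admissions system.

```python
from dataclasses import dataclass, field

# Hypothetical checklist; each school would define its own required items.
REQUIRED_ARTIFACTS = ("form", "transcript", "recommendation", "essay")


@dataclass
class Artifact:
    kind: str  # e.g. "transcript"
    text: str  # extracted text; empty or whitespace if parsing failed


@dataclass
class GateResult:
    missing: list = field(default_factory=list)
    invalid: list = field(default_factory=list)

    @property
    def ready_for_reader(self) -> bool:
        # A packet moves to reader assignment only when nothing is flagged.
        return not self.missing and not self.invalid


def completeness_gate(artifacts: list[Artifact]) -> GateResult:
    """Flag missing or unreadable items before a reader is assigned."""
    result = GateResult()
    by_kind = {a.kind: a for a in artifacts}
    for kind in REQUIRED_ARTIFACTS:
        artifact = by_kind.get(kind)
        if artifact is None:
            result.missing.append(kind)
        elif not artifact.text.strip():
            # Assumed validity rule: no extractable text means invalid.
            result.invalid.append(kind)
    return result
```

A packet with a blank transcript and no recommendation would come back with both items flagged and `ready_for_reader` set to `False`, so it never reaches a reader queue.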

2) Rubric evidence mapping

The assistant maps extracted snippets to school-defined rubric categories and creates evidence cards with source tracebacks.
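
One way to picture an evidence card is as a small record that cannot exist without its source pointer. The sketch below is illustrative only: the `EvidenceCard` fields and category names are hypothetical, and the keyword lookup is a deliberately simple stand-in for whatever LLM-based mapping a school actually uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceCard:
    rubric_category: str
    snippet: str
    source_document: str  # traceback: which file the snippet came from
    page: int             # traceback: where in that file


# Hypothetical school-defined categories with trigger keywords.
# A keyword match stands in here for the LLM mapping step.
CATEGORY_KEYWORDS = {
    "academic_readiness": ("grade", "gpa", "honors"),
    "community_contribution": ("volunteer", "club", "team"),
}


def map_snippet(snippet: str, source_document: str, page: int) -> list[EvidenceCard]:
    """Return one card per rubric category the snippet appears to support."""
    lowered = snippet.lower()
    return [
        EvidenceCard(category, snippet, source_document, page)
        for category, keywords in CATEGORY_KEYWORDS.items()
        if any(keyword in lowered for keyword in keywords)
    ]
```

Because the source fields are required constructor arguments, a card without a traceback simply cannot be built, which is the property that matters more than the mapping technique itself.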

3) Reader draft and adjudication

For each applicant, the assistant generates a neutral summary, open questions, and provisional rubric notes. Reviewers accept, edit, or reject each suggestion.
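
The accept/edit/reject step can be sketched as a small record of each reviewer decision. The `Suggestion`, `Decision`, and `Adjudication` names are hypothetical; the point is that the final text always traces back to an explicit human action.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ACCEPT = "accept"
    EDIT = "edit"
    REJECT = "reject"


@dataclass
class Suggestion:
    suggestion_id: str
    draft_text: str


@dataclass
class Adjudication:
    suggestion_id: str
    reviewer: str
    decision: Decision
    final_text: Optional[str]  # None when the suggestion was rejected


def adjudicate(suggestion: Suggestion, reviewer: str, decision: Decision,
               edited_text: Optional[str] = None) -> Adjudication:
    """Record what the reviewer did with one AI suggestion."""
    if decision is Decision.ACCEPT:
        final_text = suggestion.draft_text
    elif decision is Decision.EDIT:
        if not edited_text:
            raise ValueError("An edit decision requires the reviewer's edited text")
        final_text = edited_text
    else:
        final_text = None
    return Adjudication(suggestion.suggestion_id, reviewer, decision, final_text)
```

Storing these records alongside the original draft is one plausible way to build the edit history an audit trail needs.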

4) Inter-rater drift monitoring

A dashboard tracks score variance by rubric category and reviewer cohort. If drift crosses a predefined threshold, admissions leadership runs a re-norming session.
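
As a rough illustration of the drift check, the sketch below averages the per-applicant population variance of reviewer scores within each rubric category and flags categories above a threshold. The input tuple layout, the choice of population variance, and the threshold value are assumptions; a school might prefer a different agreement statistic.

```python
from collections import defaultdict
from statistics import pvariance


def variance_by_category(scores):
    """scores: iterable of (applicant_id, category, reviewer, score) tuples.

    Returns the mean, across applicants, of the variance between reviewers
    who scored the same applicant on the same rubric category.
    """
    grouped = defaultdict(list)
    for applicant_id, category, _reviewer, score in scores:
        grouped[(category, applicant_id)].append(score)

    per_category = defaultdict(list)
    for (category, _applicant_id), values in grouped.items():
        if len(values) >= 2:  # variance between reviewers needs two or more scores
            per_category[category].append(pvariance(values))

    return {c: sum(v) / len(v) for c, v in per_category.items()}


def categories_needing_renorm(scores, threshold):
    """List rubric categories whose average variance exceeds the threshold."""
    drift = variance_by_category(scores)
    return sorted(c for c, v in drift.items() if v > threshold)
```

For example, if two readers give the same applicant a 2 and a 4 on the essay dimension but agree on academics, only the essay category would be flagged at a threshold of 0.5.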

5) Committee packet generation

The final dossier is standardized: rubric grid, strengths/concerns, missing-evidence flags, and discussion prompts.

Tools that fit this use case

| Component | Practical role |
|---|---|
| Enrollment/admissions system | Source-of-truth for checklist status, applicant records, and workflow transitions |
| Document extraction layer | Pulls structured entities, tables, and checkboxes from mixed packet formats |
| LLM prompt templates | Produces rubric-linked evidence cards and reviewer draft notes |
| BI dashboard | Monitors throughput, backlog aging, and inter-rater variance trends |
| Audit log store | Preserves prompt/output/edit history for governance and review |

What a realistic rollout looks like

Start in shadow mode for one cohort (for example, middle-school entry):

- The assistant prepares evidence cards and draft notes.

- Human readers continue full official scoring.

- The team compares baseline and shadow-mode metrics for speed and consistency.

- Rubric prompts are tuned before any broader rollout.

Define controls up front:

- role-based access aligned to FERPA legitimate educational interest

- mandatory source tracebacks on every AI suggestion

- confidence thresholds that force human escalation for uncertain outputs

- no autonomous final scoring actions
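
Two of these controls, mandatory tracebacks and confidence-based escalation, lend themselves to a simple routing rule. The sketch below is one possible shape, not a prescribed design: the `AiSuggestion` fields, the route labels, and the 0.7 threshold are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cut-off; each school would tune this during shadow mode.
ESCALATION_THRESHOLD = 0.7


@dataclass
class AiSuggestion:
    text: str
    confidence: float   # assumed to be a score between 0.0 and 1.0
    source_refs: tuple  # tracebacks, e.g. ("transcript.pdf#p2",)


def route(suggestion: AiSuggestion, threshold: float = ESCALATION_THRESHOLD) -> str:
    """Decide how a suggestion is presented; no route writes a final score."""
    if not suggestion.source_refs:
        # Control: every AI suggestion must carry a source traceback.
        return "blocked_no_traceback"
    if suggestion.confidence < threshold:
        # Control: uncertain outputs go to a human before anything else.
        return "escalate_to_human"
    return "show_as_draft"
```

Note that the most permissive outcome is still only a draft shown to a reviewer, which keeps final scoring entirely in human hands.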

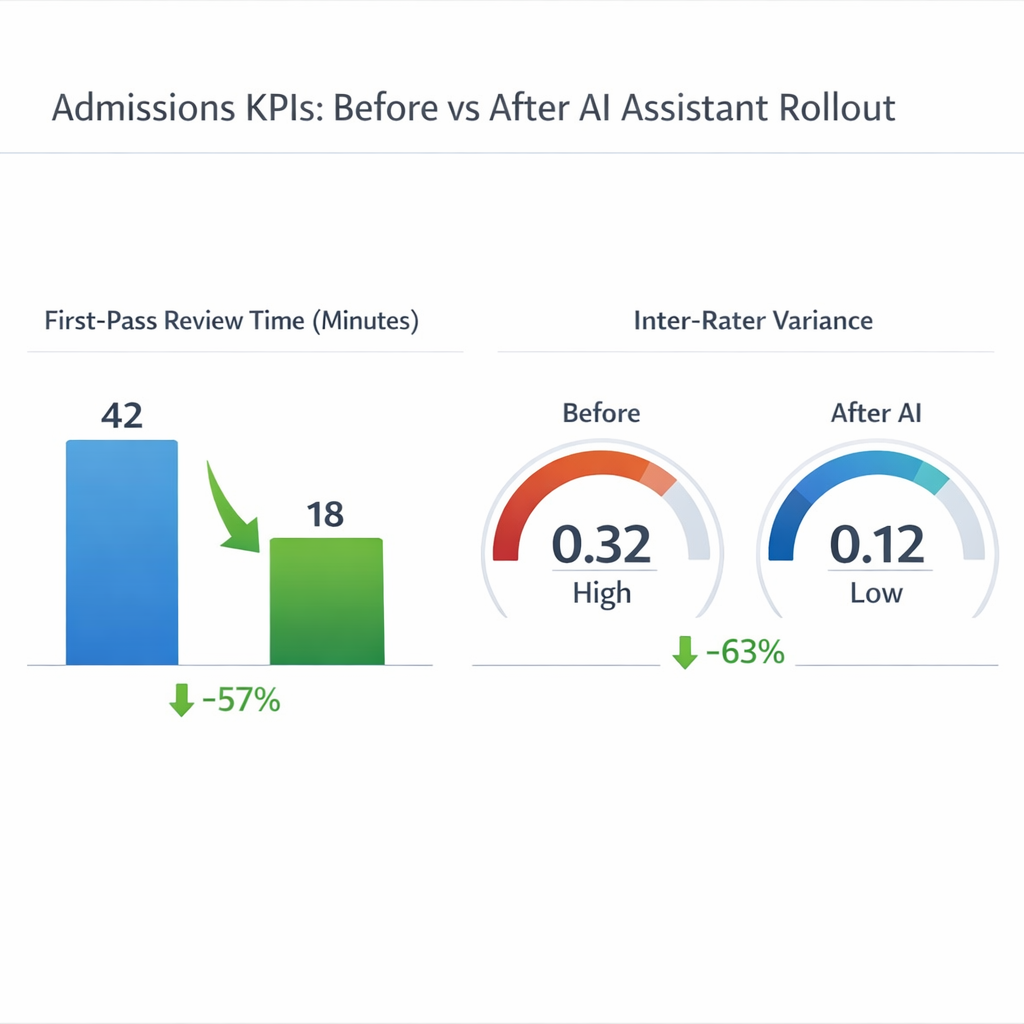

Track improvement with a simple KPI set:

- median first-pass review minutes per packet

- percentage of packets returned for missing documents pre-review

- inter-rater variance by rubric category

- committee time spent on clarification vs decision discussion
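
The first two KPIs are straightforward to compute from per-packet records. This sketch assumes a hypothetical record layout with a `review_minutes` value (or `None` if the packet was never reviewed) and a `returned_pre_review` flag; running it once on the baseline cohort and once on the shadow cohort gives a like-for-like comparison.

```python
from statistics import median


def kpi_summary(packets):
    """packets: list of dicts with 'review_minutes' and 'returned_pre_review'."""
    reviewed = [p["review_minutes"] for p in packets
                if p["review_minutes"] is not None]
    if not packets or not reviewed:
        raise ValueError("Need at least one packet with a recorded review time")
    returned = sum(1 for p in packets if p["returned_pre_review"])
    return {
        "median_review_minutes": median(reviewed),
        "pct_returned_pre_review": 100.0 * returned / len(packets),
    }
```

Using the median rather than the mean keeps a handful of unusually complex packets from distorting the picture.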

Final takeaway

A strong admissions rubric assistant does one job well: it makes reviewer judgment faster, more consistent, and more defensible. Schools that start with governed shadow mode, measurable thresholds, and strict human-in-the-loop controls can gain operational leverage without turning admissions into a black box.